So, have you ever tried to make sense of a ton of data? It’s like staring at a messy closet. You know there’s something good in there, but where do you even start?

Enter the Classification and Regression Tree, or CART for short. Sounds fancy, huh? But really, it’s just a clever way to break things down into smaller, more manageable pieces.

You’ve got your decisions and predictions all wrapped up in one neat little package. Whether you’re trying to predict customer behavior or sort out your friend group’s Netflix preferences, this tool can help.

So let’s unpack it together. You’ll see how these trees can grow and branch out to give you some clear answers when the data feels overwhelming! Are you with me?

Key Concepts and Applications of Classification and Regression Trees: A Comprehensive PDF Guide

Let’s talk about Classification and Regression Trees, or CART for short. Think of it as a decision tree for sorting information or making predictions. It has a variety of applications, whether you’re looking to predict outcomes or classify data.

What is CART?

At its core, CART takes your data and organizes it in a tree-like structure. Imagine a tree where each branch represents a choice based on specific criteria. You start at the top (the root) and move down to the leaves based on answers to yes-or-no questions about your data.

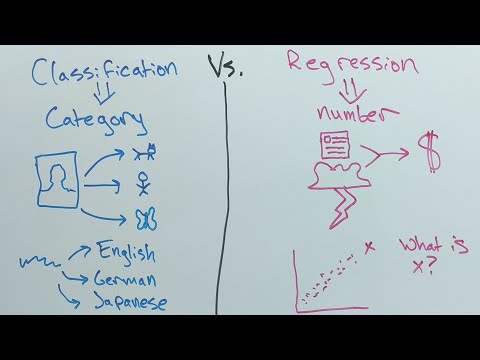

- Classification Trees: These are used when you want to sort things into categories. Think of deciding what type of game character you want to be: warrior, mage or rogue? Each question narrows down the options until you’ve classified yourself as one.

- Regression Trees: These are more about predicting numerical values. For example, if you wanted to guess how many points you’ll score based on your character’s skills in that same game, a regression tree would help make that prediction.
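To make the difference concrete, here is a minimal sketch of what each kind of tree typically reports once a sample lands in a leaf: a classification leaf answers with the most common class among its training samples, and a regression leaf answers with their average. The function names and the sample data are made up for illustration.

```python
from collections import Counter

def classification_leaf(labels):
    """Predict the most common class among the samples in a leaf."""
    return Counter(labels).most_common(1)[0][0]

def regression_leaf(values):
    """Predict the average target value of the samples in a leaf."""
    return sum(values) / len(values)

# Hypothetical training samples that ended up in the same leaf.
classes_in_leaf = ["mage", "warrior", "mage", "mage"]
scores_in_leaf = [120, 150, 90]

print(classification_leaf(classes_in_leaf))  # mage
print(regression_leaf(scores_in_leaf))       # 120.0
```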

How Do They Work?

Here’s where it gets interesting. The process involves repeatedly dividing your data into subsets based on the features that matter most for predicting an outcome; this is called “splitting.” The best splits are chosen by measuring purity (how unmixed the outcomes in each subset are after the split).

For example, if you’re trying to predict whether someone will buy a video game based on their age and income level:

- At the first split, you might ask if they’re under 25.

- If yes, it leads one way; if no, another.

- Then maybe look at income next: those who can spend more might be led down different branches.
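One common way to put a number on purity is Gini impurity, which is 0 for a perfectly pure group and grows as the group gets more mixed. The sketch below scores the “under 25?” question on a handful of invented records; the data and the helper names are assumptions for illustration, not taken from any real dataset.

```python
from collections import Counter

def gini(labels):
    """Gini impurity: 0.0 for a pure group, higher for more mixed groups."""
    n = len(labels)
    if n == 0:
        return 0.0
    return 1.0 - sum((count / n) ** 2 for count in Counter(labels).values())

def split_impurity(left, right):
    """Size-weighted average impurity of the two groups a split produces."""
    n = len(left) + len(right)
    return (len(left) / n) * gini(left) + (len(right) / n) * gini(right)

# Made-up (age, bought_game) records, for illustration only.
people = [(19, True), (22, True), (24, True), (31, False), (40, False), (23, False)]

under_25 = [bought for age, bought in people if age < 25]
others = [bought for age, bought in people if age >= 25]

print(gini([bought for _, bought in people]))  # impurity before the split
print(split_impurity(under_25, others))        # impurity after asking "under 25?"
```

On this toy data the impurity drops from 0.5 to roughly 0.25, which is the kind of improvement a tree looks for when it compares candidate questions.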

Applications of CART

CART can be applied in various fields like healthcare for diagnosing diseases or in finance for credit scoring. Here’s how it plays out in real life:

- Healthcare: Doctors can use classification trees to diagnose conditions based on patient symptoms.

- Marketing: Companies may utilize them for customer segmentation by analyzing buying patterns.

- Sports Analytics: Imagine using CART with player stats and historical performance to predict future game outcomes!

The Advantages & Disadvantages

Alright, let’s break this down. There are clear pros and cons.

- Advantages:

- Simplicity: Easy to understand even for non-techy folks.

- No need for heavy math; just logical decisions!

- You can visualize what decisions led to your outcomes with clarity.

- Disadvantages:

- A tendency to overfit: If not careful, trees can become too tailored to specific data points which might not generalize well later on.

- Sensitivity: Changes in the data might lead to completely different tree structures.

So remember this isn’t a replacement for professional advice when making serious decisions. Instead, think of CART as more of a fun tool that helps organize thoughts and make educated guesses based on patterns from past data!

And there you have it—a friendly guide through the jungle of Classification and Regression Trees!

Comprehensive Guide to Classification and Regression Trees: Applications, Techniques, and PDF Resources

Let’s take another pass at Classification and Regression Trees, or CART for short, and break it down in a super friendly way.

What are Classification and Regression Trees?

CART is a method used in data mining and statistics for making predictions. It creates a model that predicts a target variable (like whether someone will buy a product) based on various input features (like age, income, or previous purchases). The tree structure makes it easy to visualize how decisions are made.

Classification vs. Regression

Now, there’s a bit of a difference here. Classification trees are used when your output is a category. For example, think about deciding which character you’d like to play in a game based on your preferences: do you want to be agile or strong? The decision tree can help categorize that! On the other hand, regression trees predict continuous outcomes — like how many points you’ll score based on your past performance.
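For the regression side, a tree usually picks the split that leaves each group’s numbers as close to its own average as possible. Here is a rough sketch that tries every candidate threshold on one feature and keeps the one with the smallest total squared error. The `past_avg` and `points` values are invented, and squared error is just one common choice of criterion.

```python
def sse(values):
    """Sum of squared differences from the group's own mean."""
    if not values:
        return 0.0
    mean = sum(values) / len(values)
    return sum((v - mean) ** 2 for v in values)

def best_threshold(xs, ys):
    """Return the threshold t (split: x < t) with the lowest total squared error."""
    best_t, best_err = None, sse(ys)
    # Skip the smallest value: splitting there would leave the left side empty.
    for t in sorted(set(xs))[1:]:
        left = [y for x, y in zip(xs, ys) if x < t]
        right = [y for x, y in zip(xs, ys) if x >= t]
        err = sse(left) + sse(right)
        if err < best_err:
            best_t, best_err = t, err
    return best_t

# Hypothetical data: average points in past games vs. points in the next game.
past_avg = [10, 12, 11, 30, 32, 31]
points = [9, 13, 11, 29, 33, 31]

print(best_threshold(past_avg, points))  # 30
```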

How Does it Work?

The tree itself is built by splitting the dataset into subsets based on the values of different features. Each split tries to create groups that are more homogeneous with respect to the outcome variable.

- Splitting: Imagine starting with all players in a game and dividing them based on skill level – beginners vs. experts.

- Decision Nodes: Each node represents a feature used for splitting – maybe “Is your character ranged or melee?”

- Leaf Nodes: These show the final outcome or prediction – “You’re likely to win!”
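If it helps to see those pieces as a data structure, here is a small sketch: decision nodes hold a question about one feature, leaf nodes hold a prediction, and predicting means walking from the root down to a leaf. The class names, feature names, and thresholds are all hypothetical.

```python
from dataclasses import dataclass
from typing import Union

@dataclass
class Leaf:
    prediction: str  # the final outcome reported at this leaf

@dataclass
class DecisionNode:
    feature: str                         # which feature the question is about
    threshold: float                     # question: "is feature < threshold?"
    left: Union["DecisionNode", Leaf]    # followed when the answer is yes
    right: Union["DecisionNode", Leaf]   # followed when the answer is no

def predict(node, sample):
    """Walk from the root to a leaf, answering one question per decision node."""
    while isinstance(node, DecisionNode):
        node = node.left if sample[node.feature] < node.threshold else node.right
    return node.prediction

# A hand-built toy tree; in practice the tree is learned from data.
tree = DecisionNode(
    feature="skill_level",
    threshold=5,
    left=Leaf("likely to lose"),
    right=DecisionNode(
        feature="hours_played",
        threshold=10,
        left=Leaf("toss-up"),
        right=Leaf("likely to win"),
    ),
)

print(predict(tree, {"skill_level": 8, "hours_played": 25}))  # likely to win
```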

The Process Step by Step:

In simple terms, here’s how you’d build one of these trees:

1. **Choose the best feature** according to some criterion (like Gini impurity for classification tasks).

2. **Split the data** based on this feature.

3. **Repeat** until you reach some stopping criteria (like when you have too few samples left).
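The three steps above can be sketched in a few dozen lines of plain Python. This is a simplified illustration, not a full CART implementation: it assumes numeric features, uses Gini impurity, stores the tree as nested dictionaries, and stops on a depth limit or a minimum sample count. The rows of (age, income) and their labels are invented.

```python
from collections import Counter

def gini(labels):
    n = len(labels)
    return 1.0 - sum((c / n) ** 2 for c in Counter(labels).values())

def best_split(rows, labels):
    """Step 1: return the (feature, threshold) with the lowest weighted Gini, or None."""
    best, best_score = None, gini(labels)
    for feature in range(len(rows[0])):
        for threshold in sorted({row[feature] for row in rows}):
            left = [y for row, y in zip(rows, labels) if row[feature] < threshold]
            right = [y for row, y in zip(rows, labels) if row[feature] >= threshold]
            if not left or not right:
                continue
            score = (len(left) * gini(left) + len(right) * gini(right)) / len(labels)
            if score < best_score:
                best, best_score = (feature, threshold), score
    return best

def build(rows, labels, depth=0, max_depth=3, min_samples=2):
    """Steps 2 and 3: split the data on the best feature, then recurse."""
    split = None
    if depth < max_depth and len(labels) >= min_samples:
        split = best_split(rows, labels)
    if split is None:
        # Stopping criterion reached: make a leaf holding the majority class.
        return Counter(labels).most_common(1)[0][0]
    feature, threshold = split
    left = [(r, y) for r, y in zip(rows, labels) if r[feature] < threshold]
    right = [(r, y) for r, y in zip(rows, labels) if r[feature] >= threshold]
    return {
        "feature": feature,
        "threshold": threshold,
        "left": build([r for r, _ in left], [y for _, y in left],
                      depth + 1, max_depth, min_samples),
        "right": build([r for r, _ in right], [y for _, y in right],
                       depth + 1, max_depth, min_samples),
    }

def predict(tree, row):
    while isinstance(tree, dict):
        tree = tree["left"] if row[tree["feature"]] < tree["threshold"] else tree["right"]
    return tree

# Made-up (age, income in thousands) rows and purchase labels.
rows = [(19, 20), (22, 35), (24, 30), (30, 60), (45, 80), (38, 25), (50, 30)]
labels = ["buy", "buy", "buy", "buy", "buy", "skip", "skip"]

tree = build(rows, labels)
print(predict(tree, (40, 30)))  # skip
```

The `max_depth` and `min_samples` arguments are the stopping criteria from step 3; loosening them lets the tree grow until every leaf is pure, which is exactly where overfitting tends to creep in.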

Bold choices lead to bold results!

Applications of CART

There are so many areas where CART shines:

- Healthcare: Predicting patient outcomes based on various health metrics.

- E-commerce: Classifying customers for targeted advertising.

- Biosciences: Analyzing biological responses under different conditions.

For a real-life flavor, think about a soccer game like FIFA. A tree could take your past performance (goals scored, assists made) and feed it all back into predicting which tactics might work better for you next time!

Pitfalls to Watch Out For

Even though CART is fantastic, there are some things to be mindful of:

- **Overfitting:** Your tree might become overly complex and start capturing noise instead of patterns.

- **Bias:** If not enough data is available or it’s imbalanced, it may not represent reality well.

So yeah, don’t just throw data at it without thinking – make sure you’ve got enough quality info!

PDF Resources

If you’re eager to learn more about classification and regression trees beyond this post (which is friendly sharing rather than professional advice), there are plenty of PDFs out there with detailed information! Academic sites and online educational platforms usually have great materials.

In summary? CART can be an awesome tool for predictions across various fields if approached thoughtfully! So go ahead and explore its depths! Just remember: if you’re dealing with serious issues or need in-depth analysis – it’s always best to talk with someone who’s professionally trained in these matters!

Understanding Classification and Regression Trees (CART) in Data Analysis and Decision-Making

Let’s break down Classification and Regression Trees, or CART, in a way that’s easy to grasp.

CART is a popular method used in data analysis that helps with decision-making. It’s like having a helpful guide that breaks down complex data into simpler parts. You can use it for both classification (which groups things) and regression (which predicts numbers).

Here’s how it works:

- Structure: Imagine an upside-down tree. At the top is the root, which represents your main question or goal. As you move down, branches split off into more specific questions based on the answers you get. Each split helps you narrow down options.

- Node Decisions: Each point where the tree splits is called a node. A node will typically involve yes/no questions that help decide which way to go next. For example, if you’re trying to predict whether someone likes soccer based on age and gender, one question could be: “Is the person under 18?”

- Leaves: The end points of this tree are called leaves. They provide the final classification or prediction based on the previous answers in the path taken down the tree.

Let’s say you’re trying to decide what game to play with friends based on everyone’s preferences. Your root question might be “What genre do we prefer?” Branching out from there could be “Action,” “Puzzle,” or “Adventure.” If you choose Action and ask if anyone has played an action game recently, that leads you closer to picking something everyone enjoys!

In terms of applications, CART can help businesses make decisions about marketing strategies or customer preferences by analyzing past data and predicting future behaviors.

So what are its major benefits?

- Easy Interpretation: The tree structure means it’s visual and straightforward. You don’t need fancy math skills; just follow the branches!

- No Assumptions: Unlike some models, CART doesn’t assume that relationships between variables are linear, meaning it can handle complex patterns.

- Handling Missing Data: It can cope with missing values too! The original CART method does this with surrogate splits, which fall back on a related feature when the preferred one is missing, though how well this is supported depends on the software you use.

But remember! Sometimes this technique can lead to overfitting—where your model works great on training data but struggles with new information because it became too tailored to specific examples instead of general trends.

In short: CART is like having a roadmap for making decisions based on data—it breaks things down step by step! When you’re sifting through lots of info and trying to decide what’s best for your group activity or project, just think how much easier it gets with a clear visual structure guiding your choices!

And hey—you should definitely consult with a professional if you’re dealing with serious decisions based on data analysis, because they’ll have insights beyond just crunching numbers.

Okay, so let’s chat about classification and regression trees, or CART for short. It sounds super technical, but really, it’s all about breaking things down in a way that makes sense. Picture this: you’re at a party, and you’re trying to figure out whether to join the dance floor or hit the snack table. You might ask yourself a series of questions—like “Am I hungry?” or “Do I feel like dancing?”—until you decide. That’s kinda how CART works!

So it starts with a big decision tree. You have your question—let’s say it’s whether someone will buy a car based on factors like age, income, and preferences. From there, you get to ask more specific questions based on their answers until you reach a conclusion—like yes or no. It’s almost like being a detective piecing together clues.

Now imagine your friend Sarah is deciding whether to go back to school. She thinks about her job satisfaction, her financial situation, and her passion for learning. Each choice leads her down a different path in life—just like how each answer in our tree leads us closer to an answer.

The cool thing about classification trees is that they help with categorizing data into distinct classes; think of it as sorting your laundry! On the other hand, regression trees are about predicting outcomes based on input variables—maybe predicting how much money Sarah could earn after finishing her degree.

I remember when my cousin decided to buy his first house. He was all over the place trying to weigh options—you know? How many bedrooms? What location? What can he afford? Eventually, he used some kind of decision-making technique that reminded me of CART because he listed everything out logically until he found his dream home.

In practice, you can find these trees everywhere—from healthcare predicting patient outcomes to businesses forecasting sales trends. They make complex decisions more digestible by showing every possible route of questioning along the way!

But hey! Here’s the catch: while these trees can be super helpful for explaining stuff visually and making sense of data patterns, they can sometimes get too complicated or overfit if you have too many branches going everywhere—imagine growing tangled vines instead of a neat tree! So keeping things manageable is key.

All in all, CART is just one part of this bigger picture we call data science that helps us make informed choices without losing ourselves in data chaos. Isn’t it wild how we can take these simple concepts and apply them in such different areas? Life’s all about finding clarity amid confusion—and that’s what classification and regression trees do best!